I’ve got a sample dataset about US airplane flights, in a table called flights_data.Ĭonn =psycopg2.

Let’s run a more realistic query than SELECT 1 to demonstrate this. Since most data analytics and data science projects use pandas to crunch data, what we really want is to transform the results of a Redshift query into a pandas DataFrame.

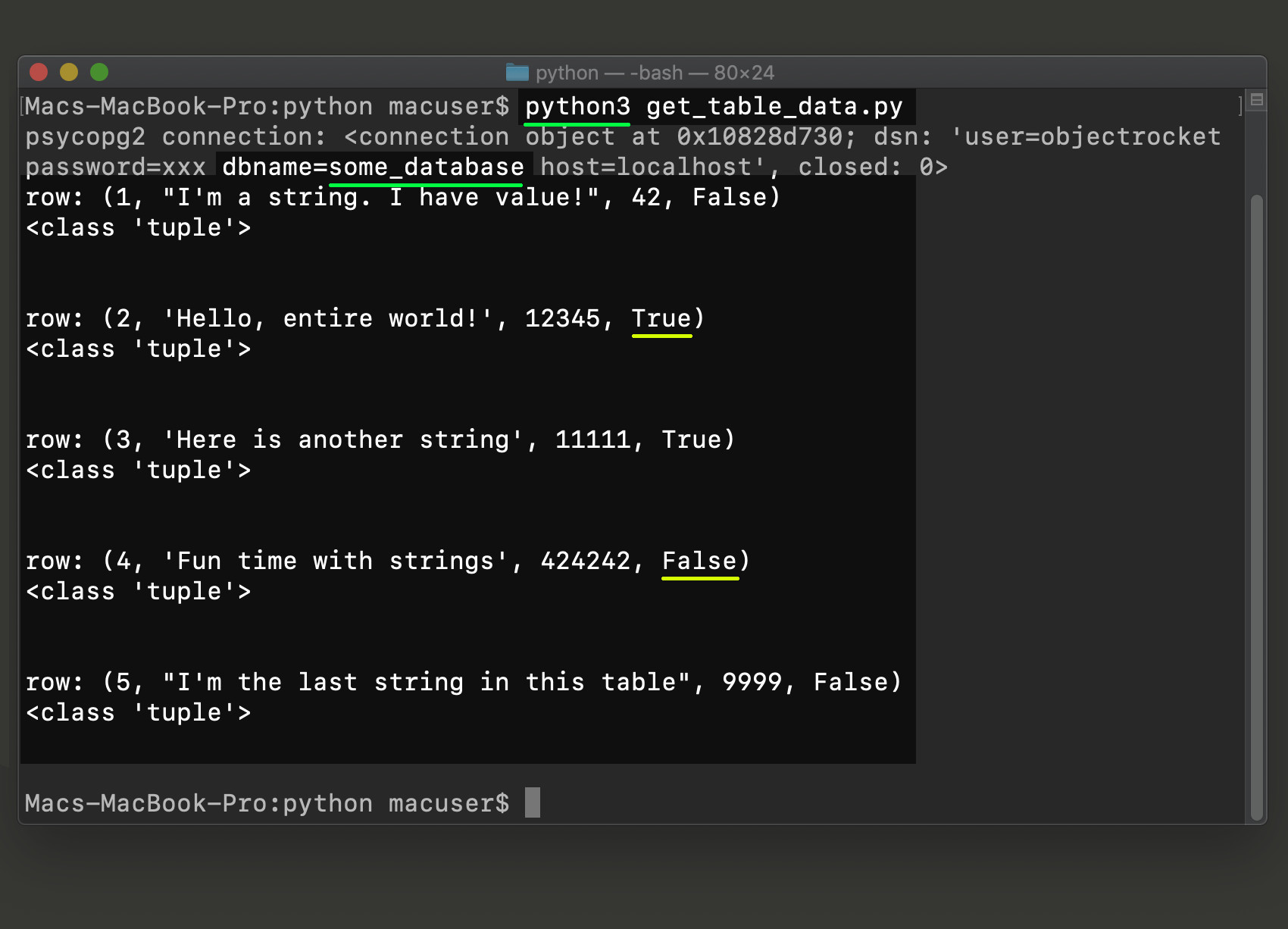

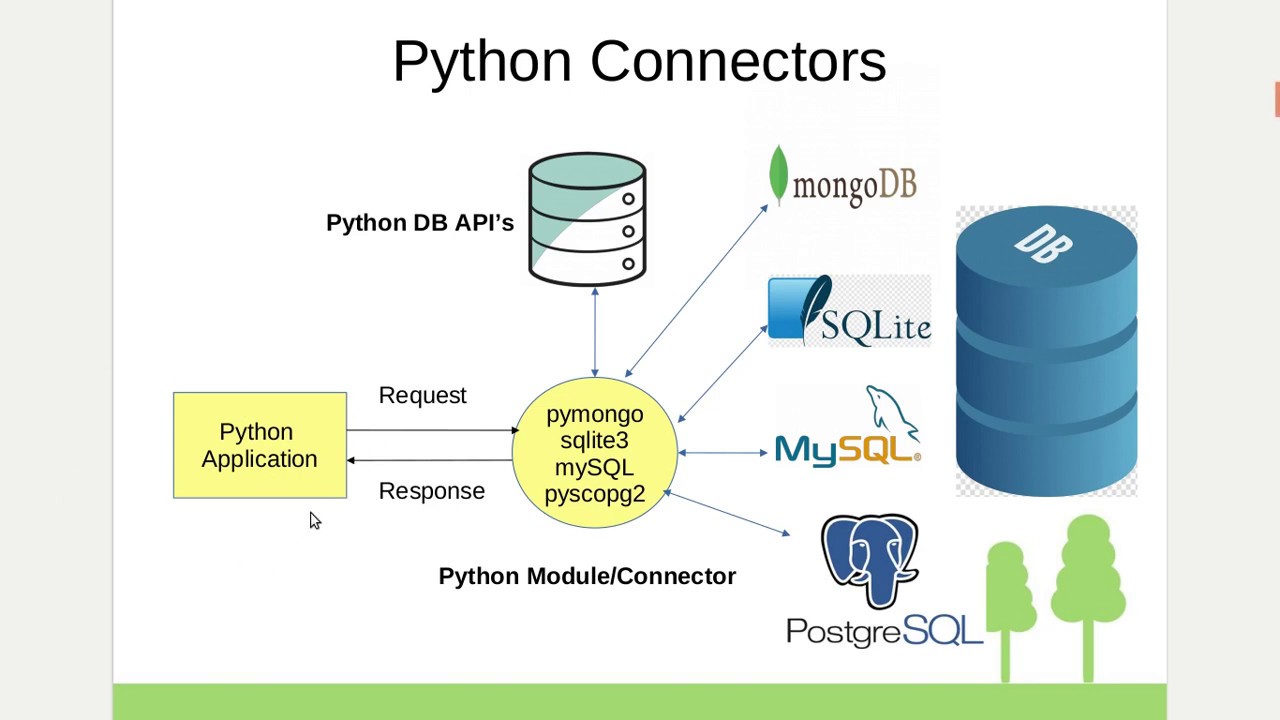

They’re very thorough, but can be a bit hard to understand. So now you've run a query- excellent! But this unstructured fetching and printing of results isn't the best way to work with real data you don’t just want to look at the results printed out, you want to actually do things with them! You could manually replace that print() line with some code that dumps rows into some data structure you can use… or you could use the fetch_dataframe() method to do that automatically!įor more information about the connector, read the official Redshift docs. By default, a new redshift cluster is actually configured to not let anyone access it!** So you might have to set up the security groups to let you in. This most likely means your AWS security groups are blocking access to the redshift instance. Some form of Failed to establish a connection to, Connection refused, or Could not connect to server: Connection timed out. Re-run the cursor = conn.cursor() command to get a new, fresh cursor and then re-run cursor.execute(). You probably wrote a query with a syntax error, and running it broke your cursor state. This means your cursor has crashed, and needs to be recreated. This process can be a little bit finicky, but most errors fall into one of two buckets:Ĭurrent transaction is aborted, commands ignored until end of transaction block. Now let’s actually start getting some data.įirst, we’ll run a simple query just to test that our connection worked properly. This means if a bad actor gets their hands on this code, they still won’t have anything sensitive. Kind of like how you can ‘point’ at things on the screen with your mouse cursor, this virtual cursor acts as a pointer to rows in your database and lets you point at and select them.Īlso, notice how we’re using the os.environ syntax to reference the environment variable containing our password. A cursor is the standard terminology for an object used to actually access records in a database. These commands create a new connection and open a “cursor” to your Redshift data warehouse. There are a ton of other options you can pass to this connect() method, so if you use some exotic configuration for your IAM or Redshift config, check out that page. Your “host” is just the URL of your Redshift instance, accessible from the AWS Redshift console page. □ Not sure where to find this information? If you want to make sure, you can run this Python command in a cell and look for a response that's >= 3.6:

You should be pretty much good to go in this case. You’re using a Jupyter notebook or a Jupyter notebook alternative: There are two likely scenarios here for how you're accessing Python:Ī. If you already know you have a working Python 3.6+ installation, you can skip to the Installing the redshift_connector package section. The packages used in this tutorial require Python 3.6+, and not much else. If you’re running through this live, it should only take around 5-10 minutes to go from start to successful query. Reading data from a Redshift query into a pandas DataFrame Running SQL queries! At this point, you’ve successfully connected to and queried Redshift from Python, and you can write any SQL query you want. Authenticating and connecting to your Redshift data warehouse 3. Installing the Amazon Redshift Python Connector ( redshift_connector) 2. There’s a few different ways to do this, but we’ll cover the recommended method using the official Redshift Python connector. In this post, we'll cover how to do exactly that! You’ll learn all the skills and steps needed to efficiently query data from Redshift right from your local Python environment or a Jupyter notebook. And if you’re at one of those 22.14% of companies that use Redshift, you’re going to need to connect to your Redshift data warehouse from Python. But according to the strange web of maybe-made-up-market-data websites, good old Amazon Redshift is still the king (or at least somewhere in the top 5).Īs you do data science or analysis, you're going to dabble into Python at some point. There’s a lot of new kids on the analytical data warehouse block these days.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed